With the question of whether Snapchat is getting banned in 2026 at the forefront, the topic has been sparking debate among social media enthusiasts, many of whom are wondering about the impact on users and advertisers. What led to the rise of social media regulation, and how might it affect Snapchat?

The recent history of social media regulation in the US and EU has been marked by efforts to mitigate online safety concerns, leading to stricter regulations for social media platforms like Snapchat. In this article, we’ll delve into the potential consequences of a Snapchat ban in 2026 and explore the evolving landscape of social media regulation.

The Rise of Social Media Regulation and Its Impact on Snapchat

In recent years, the United States and European Union have implemented stricter regulations on social media platforms, including Snapchat, in response to growing concerns over online safety and the spread of misinformation. These regulations aim to protect users’ data and prevent the spread of harmful content. As a result, Snapchat, like other social media platforms, is facing increased scrutiny from regulatory bodies.

The rise of online safety concerns was fueled by several high-profile incidents, including the Cambridge Analytica scandal, in which personal data from millions of Facebook users was harvested and used for targeted political advertising. In response, regulators began to push for stricter regulations on social media platforms, requiring them to take greater responsibility for the content shared on their platforms.

Regulations in the United States

In the United States, the Children’s Online Privacy Protection Act (COPPA) requires operators of online services directed at children under 13, or that knowingly collect personal information from them, to obtain verifiable parental consent before collecting, using, or disclosing that information. Snapchat, like most major platforms, requires users to be at least 13. Proposals such as “COPPA 2.0” would extend similar protections to older teens. Beyond COPPA, the US has no single comprehensive federal privacy law. Instead, the Federal Trade Commission (FTC) acts against companies whose data practices are unfair or deceptive.


Regulations in the European Union

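The General Data Protection Regulation (GDPR) requires social media platforms to give users clear and concise information about how their data is collected and processed. Platforms must also have a lawful basis for each use of personal data. Consent is one such basis, and where a platform relies on it, consent must be freely given, specific, informed, and unambiguous. For children below the age of digital consent (16 by default, which member states may lower to 13), a parent must give or authorize consent. Furthermore, companies must implement appropriate security measures to protect user data. They must also notify the relevant supervisory authority of a personal data breach without undue delay and, where feasible, within 72 hours of becoming aware of it.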
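The 72-hour breach-notification window is simple date arithmetic, but it is easy to get wrong when time zones are involved. The sketch below is a minimal illustration, not legal guidance. It assumes the clock starts when the company becomes aware of the breach and counts 72 calendar hours. It also ignores the GDPR's allowance for a later notification that explains the delay. The function names `breach_notification_deadline` and `hours_remaining` are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Assumption: 72 calendar hours counted from the moment the company
# becomes aware of the breach.
NOTIFICATION_WINDOW = timedelta(hours=72)


def breach_notification_deadline(aware_at: datetime) -> datetime:
    """Return when the 72-hour notification window closes (hypothetical helper)."""
    if aware_at.tzinfo is None:
        # Reject naive timestamps so local time and UTC cannot be mixed silently.
        raise ValueError("aware_at must be timezone-aware")
    return aware_at + NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the window closes; negative once it has passed."""
    return (breach_notification_deadline(aware_at) - now).total_seconds() / 3600


aware = datetime(2024, 3, 1, 9, 30, tzinfo=timezone.utc)
print(breach_notification_deadline(aware).isoformat())  # 2024-03-04T09:30:00+00:00
print(hours_remaining(aware, datetime(2024, 3, 3, 9, 30, tzinfo=timezone.utc)))  # 24.0
```

Requiring a timezone-aware timestamp is a deliberate design choice. A naive local time interpreted as UTC could shift the computed deadline by several hours.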

Impact on Snapchat

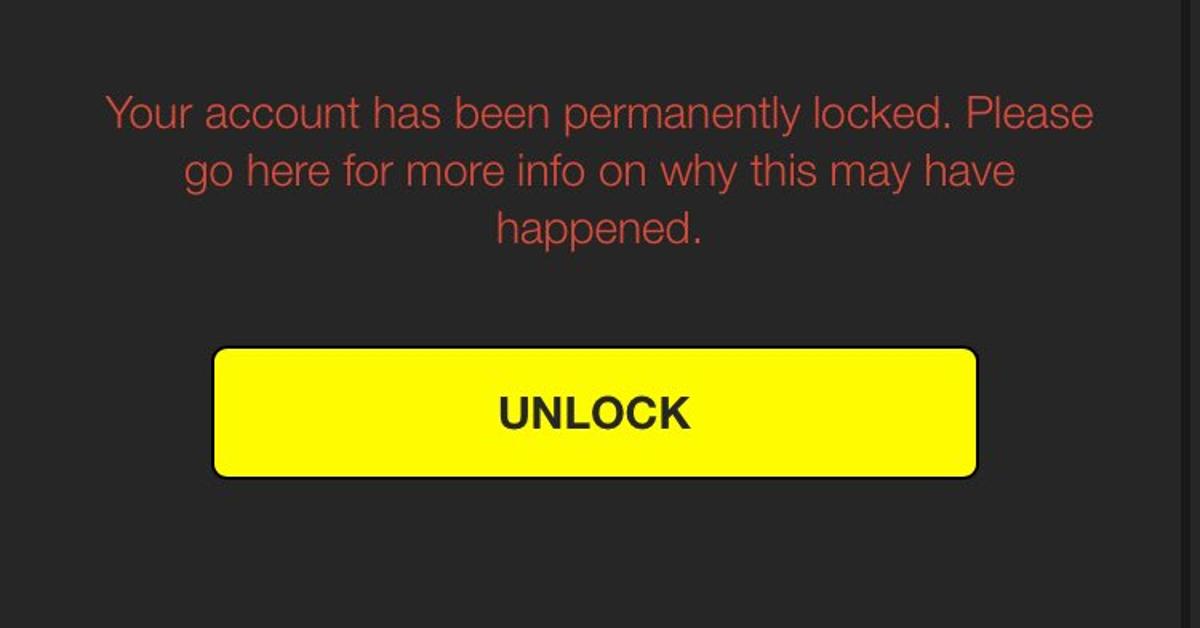

Snapchat, like its peers, has had to adapt its consent and data practices to these rules, and its data handling has drawn regulatory attention. In 2023, for example, the UK’s Information Commissioner’s Office (ICO) issued a preliminary enforcement notice over Snap’s assessment of the privacy risks of its “My AI” chatbot.

Snapchat also gives users controls over their data, such as options to clear conversation and search history, download their account data, and delete their account. It also provides clearer information about data collection and processing.

- The implementation of GDPR in the European Union has increased the costs for social media platforms to comply with regulations.

- The changes in regulations have led to significant updates to social media platforms to comply with the new requirements.

- Regulators have issued significant fines for non-compliance with regulations.

Impact on Other Social Media Platforms

Facebook, Instagram, and Twitter (now X) have faced similar challenges in implementing the new regulations, resulting in fines and revisions to their policies and practices.

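Facebook’s parent company, Meta, has received several large GDPR fines from Ireland’s Data Protection Commission, its lead regulator in the EU. These include a record €1.2 billion fine in 2023 over transfers of European users’ data to the United States.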

Instagram has also added controls such as “private accounts” and “delete account.” These give users more say over who sees their content and whether the platform keeps their data, along with clearer information about data collection and processing.

Twitter (now X) was fined €450,000 by Ireland’s Data Protection Commission in 2020. The fine was for failing to notify a data breach within the GDPR’s 72-hour deadline and to document the breach adequately.

Regulators are working closely with social media companies to ensure compliance with regulations.

Conclusion

The rise of social media regulation has significant implications for Snapchat and other social media platforms. The implementation of new regulations has resulted in increased costs, fines, and revisions to policies and practices. As regulatory bodies continue to scrutinize social media platforms, the importance of data protection and consent will only continue to grow.

Snapchat’s Content Moderation Policies and Their Vulnerabilities

Snapchat, a popular social media platform, has been criticized for its content moderation policies and practices. The platform’s algorithms and human moderators are tasked with monitoring and removing content that violates its community guidelines, which span a wide range of topics, including hate speech, violence, and harassment. However, Snapchat’s content moderation policies have been criticized for being inconsistent, biased, and inadequate, leading to concerns about the platform’s ability to effectively balance free speech and online safety.

Snapchat’s content moderation policies are designed to prohibit content that is considered obscene, defamatory, or harassing in nature. The platform also prohibits content that promotes hate groups, violence, or self-harm. However, critics argue that Snapchat’s policies are too vague and open to interpretation, leading to inconsistent enforcement and unequal treatment of users. For example, some users have reported being banned for posting content that was deemed to be “inflammatory” or “offensive,” while others have been allowed to continue posting similar content.

Challenges in Balancing Free Speech and Online Safety

Balancing free speech and online safety is a complex challenge that many social media platforms face. On one hand, users have the right to express themselves freely and share their thoughts and opinions on various topics. On the other hand, online platforms must ensure that users are not exposed to hate speech, harassment, or other forms of harm. Snapchat, like other social media platforms, must navigate this delicate balance in order to prevent online harm while also protecting users’ rights to free speech.

Snapchat has implemented various measures to balance free speech and online safety. These include AI-powered moderation tools and human moderators who review and remove content that violates the platform’s community guidelines. However, critics argue that these measures are inadequate. They also argue that Snapchat’s policies focus too much on removing content deemed “offensive” rather than on promoting a positive and respectful online environment.

Case Study: Criticisms of Snapchat’s Content Moderation Policies

One notable case study that highlights the criticisms of Snapchat’s content moderation policies is the platform’s handling of hate speech and extremist content. In 2018, a group of users reported that Snapchat was allowing extremist content, including white nationalist propaganda, to be shared on the platform. The platform subsequently removed the content and implemented new policies to prevent similar content from being shared in the future.

However, critics argued that Snapchat’s policies were too narrow and that the platform was not doing enough to stop hate speech and extremist content. They said the platform was failing to address the root causes of online hate. In their view, its policies focused on removing content after it had been shared rather than preventing it from being shared in the first place.

Snapchat’s content moderation policies have also been criticized for being biased towards certain groups or individuals. For example, some users have reported that Snapchat’s algorithms were more likely to flag content posted by users from minority groups or with disabilities. The platform has since implemented additional measures to prevent bias in its content moderation policies, but critics argue that more needs to be done to ensure that the platform is fair and equitable for all users.

- Snapchat’s content moderation policies are designed to prohibit content that is considered obscene, defamatory, or harassing in nature.

- The platform prohibits content that promotes hate groups, violence, or self-harm.

- Critics argue that Snapchat’s policies are too vague and open to interpretation, leading to inconsistent enforcement and unequal treatment of users.

- Snapchat has implemented various measures to balance free speech and online safety, including AI-powered moderation tools and human moderators.

- Critics argue that Snapchat’s content moderation policies are too focused on removing content that is deemed to be “offensive” rather than promoting a positive and respectful online environment.

- One notable case study that highlights the criticisms of Snapchat’s content moderation policies is the platform’s handling of hate speech and extremist content.

The Growing Pressure on Social Media Companies to Monitor User Activity

As social media continues to shape modern communication, concerns about cyberbullying, harassment, and online harm have led to increasing calls for social media companies to take a more active role in monitoring user activity. This shift in public opinion is prompting companies to reassess their content moderation policies and user anonymity protections. Snapchat, in particular, faces a delicate balance between protecting user anonymity and providing a safe environment for its users.

This growing pressure on social media companies to monitor user activity is driven by several factors, including the rise of online harassment and cyberbullying. A 2022 Pew Research Center survey, for example, found that 46% of U.S. teens ages 13 to 17 had experienced at least one of six forms of cyberbullying, with offensive name-calling the most common. This has led to increased scrutiny of social media companies, with many calling for more robust content moderation policies and greater transparency in their moderation processes.

Cyberbullying and the Impact on Social Media Companies

Social media companies are grappling with the challenge of balancing user anonymity with the need to prevent cyberbullying and online harassment. This requires implementing more effective content moderation policies and technologies to detect and prevent harmful behavior. However, this also raises concerns about user privacy and the potential for false positives or over-moderation.
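A toy example can make the false-positive concern concrete. The sketch assumes a hypothetical classifier that scores each post from 0 to 1, plus a rule that removes every post scoring at or above a chosen threshold. The scores and labels in `SAMPLES` are invented for illustration and do not describe any real platform's system.

```python
# Hypothetical (score, is_bullying) pairs: the score is a classifier's estimate
# that a post is bullying; the label is what a human reviewer would decide.
SAMPLES = [
    (0.95, True), (0.90, True), (0.80, False), (0.70, True),
    (0.60, False), (0.40, True), (0.30, False), (0.10, False),
]


def moderation_outcomes(threshold: float) -> dict[str, int]:
    """Count outcomes if every post scoring at or above `threshold` is removed."""
    removed = sum(1 for score, _ in SAMPLES if score >= threshold)
    # Harmless posts removed anyway (over-moderation).
    false_positives = sum(1 for score, bad in SAMPLES if score >= threshold and not bad)
    # Bullying posts left up (under-moderation).
    missed = sum(1 for score, bad in SAMPLES if score < threshold and bad)
    return {"removed": removed, "false_positives": false_positives, "missed": missed}


for threshold in (0.35, 0.65, 0.85):
    print(threshold, moderation_outcomes(threshold))
```

With these invented numbers, a threshold of 0.35 removes two harmless posts and misses no bullying. A threshold of 0.85 removes no harmless posts but misses two bullying ones. Lowering the threshold reduces missed harm at the cost of more over-moderation, and raising it does the reverse.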

To combat cyberbullying, social media companies can incorporate the following strategies:

- Introduce AI-powered content moderation tools to detect and remove bullying content in real time.

- Implement more effective reporting mechanisms to enable users to quickly report and flag harmful behavior.

- Develop and promote education campaigns to raise awareness about online harassment and digital citizenship.

- Collaborate with experts and organizations to develop best practices for addressing online hate and harassment.

These strategies require careful implementation to strike a balance between protecting user anonymity and preventing harm to others.
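As one illustration of a reporting mechanism, user reports could be routed through a priority queue so that the most severe categories reach human reviewers first. This is a minimal sketch, not a description of how Snapchat actually handles reports. The category names and their ranking in `SEVERITY` are hypothetical. Reports with the same severity are reviewed in the order they arrived.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical severity ranking: a lower number is reviewed sooner.
SEVERITY = {"self_harm": 0, "threat": 1, "harassment": 2, "spam": 3}


@dataclass(order=True)
class Report:
    priority: int
    seq: int  # arrival order; breaks ties so earlier reports come first
    post_id: str = field(compare=False)
    reason: str = field(compare=False)


class ReportQueue:
    """Surfaces the most severe user reports to reviewers first."""

    def __init__(self) -> None:
        self._heap: list[Report] = []
        self._arrivals = itertools.count()

    def submit(self, post_id: str, reason: str) -> None:
        # Unrecognized reasons are still queued, just behind known categories.
        priority = SEVERITY.get(reason, len(SEVERITY))
        heapq.heappush(self._heap, Report(priority, next(self._arrivals), post_id, reason))

    def next_for_review(self) -> Optional[Report]:
        # Returns None when there is nothing left to review.
        return heapq.heappop(self._heap) if self._heap else None


queue = ReportQueue()
queue.submit("post-17", "spam")
queue.submit("post-42", "harassment")
queue.submit("post-99", "self_harm")
print([queue.next_for_review().post_id for _ in range(3)])  # ['post-99', 'post-42', 'post-17']
```

A heap keeps each submission and retrieval at logarithmic cost. The arrival counter ensures that two reports in the same category are never reordered.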

User Anonymity and Social Media Regulation

As social media companies struggle to balance user anonymity with content moderation, regulatory pressures are also increasing. Governments and lawmakers are introducing regulatory frameworks that require social media companies to implement more robust moderation policies and provide greater transparency in their moderation processes. For example, the EU’s Digital Services Act requires large platforms to explain moderation decisions to affected users and to publish regular transparency reports. Separately, the EU’s General Data Protection Regulation (GDPR) sets stricter data protection and privacy requirements.

To navigate these regulatory pressures, social media companies like Snapchat must adapt their content moderation policies and user anonymity protections to meet evolving expectations. This may involve:

| Feature | Description |
|---|---|
| Enhanced Content Moderation | Implement AI-powered content moderation tools to detect and remove bullying content in real time. |
| User Reporting Mechanisms | Introduce more effective reporting mechanisms so users can quickly report and flag harmful behavior. |
| User Education and Awareness | Develop and promote education campaigns to raise awareness about online harassment and digital citizenship. |
| Regulatory Compliance | Work with regulators, experts, and advocacy organizations to align moderation practices with legal requirements and with best practices for addressing online hate and harassment. |

In conclusion, the growing pressure on social media companies to monitor user activity has significant implications for Snapchat’s business model and user engagement. To adapt to these changing expectations while protecting user anonymity, Snapchat will need to implement enhanced content moderation policies, user reporting mechanisms, user education and awareness, and regulatory compliance strategies.

Preparing for Potential Regulation-Related Challenges: Enhancing Content Moderation and Building Transparency

As the social media landscape continues to evolve, Snapchat must adapt to the growing regulatory scrutiny and societal expectations. To mitigate the risks associated with potential regulation, Snapchat can take proactive steps to refine its content moderation policies, enhance transparency, and foster collaborative relationships with governments, advocacy groups, and other stakeholders.

Enhancing Content Moderation Policies

Enhancing content moderation policies is crucial for Snapchat to ensure a positive user experience while maintaining a safe and respectful online environment. This involves continuous refinement of moderation rules, algorithms, and human review processes to effectively address emerging issues.

Snapchat can consider the following strategies to improve its content moderation policies:

- Regularly review and update moderation guidelines to reflect evolving societal norms and user expectations.

- Implement more sophisticated machine learning algorithms to detect and flag potentially harmful content.

- Invest in human review processes to ensure that complex moderation decisions are handled with care and attention to detail.

- Prioritize user feedback and reports to improve content moderation and prevent recurrence of problematic content.

- Establish clear guidelines for creators and users on acceptable content and best practices.

- Develop effective reporting mechanisms for users to flag issues and concerns.

By implementing these measures, Snapchat can improve its content moderation policies, reducing the likelihood of regulatory challenges and enhancing the overall user experience.

Improving Transparency

Transparency is critical for building trust with users, regulators, and other stakeholders. Snapchat can enhance its transparency through various initiatives:

- Disclose moderation policies, guidelines, and processes to foster understanding and trust with users.

- Regularly publish reports on content moderation, including statistics on flagged or removed content.

- Provide users with clear explanations for moderation decisions and actions.

- Establish a robust appeal process for users to contest moderation decisions.

- Offer educational resources and guidelines to help creators understand content moderation policies.

By prioritizing transparency, Snapchat can demonstrate a commitment to accountability and trustworthiness, ultimately reducing the likelihood of regulatory backlash.
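The statistics in a transparency report can be derived from a log of moderation decisions. The sketch assumes a hypothetical `ModerationAction` record that stores a policy category, whether content was removed, and whether an appeal reversed the removal. It aggregates these into flagged and removed counts per category, plus an appeal overturn rate, which can indicate how often initial decisions were reversed. The field names and summary keys are illustrative assumptions, not any platform's actual reporting format.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ModerationAction:
    category: str              # hypothetical policy category, e.g. "harassment"
    removed: bool              # True if the flagged content was taken down
    appealed: bool = False     # True if the user contested the decision
    overturned: bool = False   # True if the appeal reversed the decision


def transparency_summary(actions: list[ModerationAction]) -> dict:
    """Aggregate a decision log into report-style statistics."""
    flagged = Counter(a.category for a in actions)
    removed = Counter(a.category for a in actions if a.removed)
    appeals = sum(1 for a in actions if a.appealed)
    overturned = sum(1 for a in actions if a.overturned)
    return {
        "flagged_by_category": dict(flagged),
        "removed_by_category": dict(removed),
        "appeals": appeals,
        # Guard against division by zero when no decisions were appealed.
        "appeal_overturn_rate": overturned / appeals if appeals else 0.0,
    }


log = [
    ModerationAction("harassment", removed=True, appealed=True, overturned=True),
    ModerationAction("harassment", removed=True),
    ModerationAction("spam", removed=True, appealed=True),
    ModerationAction("spam", removed=False),
]
print(transparency_summary(log))
```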

Collaboration with Governments and Stakeholders

Effective collaboration with governments, advocacy groups, and other stakeholders is essential for Snapchat to navigate the complex regulatory landscape. This involves engaging in open dialogue, sharing expertise, and jointly addressing challenges:

- Engage in regular dialogue with governments and regulatory bodies to discuss emerging issues and potential regulations.

- Collaborate with advocacy groups and experts to develop guidelines and best practices for content moderation.

- Participate in industry-wide initiatives and forums to share experiences and develop collective solutions.

- Develop educational programs and resources to raise awareness about online safety, digital literacy, and content moderation.

- Foster partnerships with researchers, academics, and think tanks to stay informed about emerging trends and issues.

By working collaboratively with governments and stakeholders, Snapchat can build a supportive regulatory environment, reduce the risk of potential challenges, and ensure a positive online experience for users.

Lessons from Other Social Media Platforms

Other social media platforms have navigated similar challenges in the past, providing valuable lessons for Snapchat:

- Facebook has implemented stricter moderation policies, particularly around hate speech and misinformation.

- Twitter (now X) has introduced new features to combat harassment and abuse, including reporting mechanisms and moderation tools.

- Instagram has developed more sophisticated content moderation systems, focusing on AI-powered detection and human review.

- YouTube has enhanced its community guidelines and moderation policies, emphasizing creator responsibility and education.

By studying the strategies and approaches of other social media platforms, Snapchat can gain valuable insights and adapt its policies to better navigate the complex regulatory landscape.

Concluding Remarks

In conclusion, the possibility of a Snapchat ban in 2026 raises critical questions about the future of social media regulation and its implications for users, advertisers, and the tech industry. As we navigate this complex terrain, it’s essential to consider the potential consequences of a ban and the opportunities for Snapchat to adapt and innovate in response to evolving public expectations.

FAQ Resource: Is Snapchat Getting Banned In 2026

Will a Snapchat ban in 2026 affect users worldwide?

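Not necessarily. Bans are imposed by individual governments, so a ban would most directly affect users in the country or region that enacted it. Users elsewhere could still feel indirect effects, for example if creators or brands they follow lose a large share of their audience.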

How will a Snapchat ban affect advertisers?

A Snapchat ban in 2026 could impact advertisers who rely on the platform to reach their target audience, potentially leading to a decline in advertising revenue.

Can Snapchat prepare for potential regulation-related challenges?

Yes, Snapchat can take steps to prepare for regulatory challenges by enhancing content moderation policies, improving transparency, and engaging with governments and advocacy groups.